Building A Visual Planetary Time Machine

June 10, 2013

Posted by Randy Sargent, Google/Carnegie Mellon University; Matt Hancher and Eric Nguyen, Google; and Illah Nourbakhsh, Carnegie Mellon University


When a societal or scientific issue is highly contested, visual evidence can cut to the core of the debate in a way that words alone cannot — communicating complicated ideas that can be understood by experts and non-experts alike. After all, it took the invention of the optical telescope to overturn the idea that the heavens revolved around the earth.

Last month, Google announced a zoomable and explorable time-lapse view of our planet. This time-lapse Earth lets you explore the last 29 years of our planet’s history, from the global scale to the local scale, all across the planet. We hope this new visual dataset will ground debates, encourage discovery, and shift perspectives about some of today’s pressing global issues.

This project is a collaboration between Google’s Earth Engine team, Carnegie Mellon University’s CREATE Lab, and TIME Magazine — using nearly a petabyte of historical record from USGS’s and NASA’s Landsat satellites. And in this post, we’d like to give a little insight into the process required to build this time-lapse view of our planet.

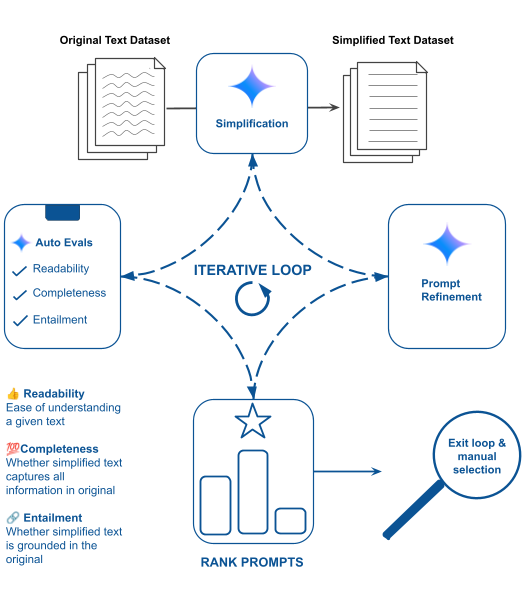

Previews of the phenomena visible in these time-lapses.

First we'll describe Google’s Earth Engine system for deriving the time-series imagery. Second, we'll tell you more about CMU’s open-source “Time Machine” software for creating and streaming large, explorable time-series imagery.

Annual Composites: Distilling a Massive Dataset

Google Earth Engine brings together the world's scientific satellite imagery, over a petabyte of multispectral imagery recording over 40 years of history, and makes it available online. Scientists, independent researchers, and nations can use its tools and Google’s computational infrastructure to mine this massive warehouse of data: detecting changes, mapping trends, and quantifying differences on the Earth's surface. Today, the platform is used to monitor the Amazon and estimate forest carbon in Tanzania, and hundreds of other partners are developing new uses for the technology.

Using Earth Engine, we first built annual global mosaics at a resolution of 30 meters per pixel for each year from 1984 through 2012. We started with a total of 2,068,467 scenes from the Landsat 4, 5, and 7 satellites, comprising 909 terabytes of data. The Earth’s atmosphere is a constantly shifting sea of clouds, so to assemble a seamless cloud-free view of each year we analyzed all the images available at each location and used a simple cloud model to separate the clouds from the ground. To help correct for atmospheric and seasonal effects, we used an additional 20 terabytes of data from the MODIS MCD43A4 product to build a cloud-free low-resolution model of the Earth over time. We combined all this to produce a statistical estimate of the color of each pixel for every year for which data was available. Producing the final 29 global mosaics took a bit less than a day and consumed approximately 260,000 core-hours of CPU.
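To make the idea of a per-pixel statistical estimate concrete, here is a minimal Python sketch of a cloud-free annual composite. It is not the Earth Engine implementation: the brightness threshold `CLOUD_THRESHOLD`, the toy cloud test, and the choice of a per-band median are all assumptions made for illustration, and the MODIS-based correction is left out entirely.

```python
from statistics import median

# Hypothetical threshold: a pixel brighter than this in every band is
# treated as cloud. The real cloud model is not described in detail.
CLOUD_THRESHOLD = 0.6


def is_cloudy(pixel):
    """Toy cloud model: clouds are bright in all bands at once."""
    return min(pixel) > CLOUD_THRESHOLD


def composite_pixel(observations):
    """Per-band median of the cloud-free observations of one location.

    observations: list of (r, g, b) reflectance tuples from one year.
    Returns None when every observation is cloudy.
    """
    clear = [p for p in observations if not is_cloudy(p)]
    if not clear:
        return None  # no valid data for this pixel in this year
    # zip(*clear) regroups the tuples into one sequence per band.
    return tuple(median(band) for band in zip(*clear))


def annual_composite(scenes):
    """Composite a list of same-shaped scenes (rows of pixel tuples)."""
    rows, cols = len(scenes[0]), len(scenes[0][0])
    return [
        [composite_pixel([scene[y][x] for scene in scenes]) for x in range(cols)]
        for y in range(rows)
    ]
```

A median is used here because it ignores a few leftover outliers, such as thin cloud or shadow that slips past the threshold. Pixels that come back as `None` correspond to the missing or cloud-obscured years discussed next.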

Some areas of the planet are almost perpetually cloudy, obscuring satellite views. In addition, before the more capable Landsat 7 began operating in 1999, coverage in some areas of the world was sparse, particularly in Asia, for various operational and technological reasons. We wrestled with how best to visualize areas with missing or cloud-obscured images from each year. In the end, after much experimentation, we chose to simply interpolate between valid image years. Other techniques, such as greying out invalid data, created distractingly large artifacts, visually drowning out the valid information. However, the downside with the approach we have taken is that it can be difficult to tell which data is original and which is interpolated. We are exploring the possibility of including a view that allows drilling down into the non-interpolated, original mosaics.
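Interpolating between valid image years can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the production code: it works on one band of one pixel, uses linear interpolation, and fills gaps at the start or end of the series by copying the nearest valid year. The function name `fill_missing_years` is hypothetical.

```python
def fill_missing_years(values):
    """Fill None entries in a per-year series for one pixel band.

    Gaps between two valid years are filled by linear interpolation.
    Gaps before the first or after the last valid year copy the
    nearest valid value (an assumption; the post does not say).
    """
    valid = [i for i, v in enumerate(values) if v is not None]
    if not valid:
        return list(values)  # nothing to interpolate from

    filled = list(values)
    for i, v in enumerate(values):
        if v is not None:
            continue
        before = [j for j in valid if j < i]
        after = [j for j in valid if j > i]
        if before and after:
            lo, hi = before[-1], after[0]
            # Weight by how far year i sits between the two valid years.
            filled[i] = values[lo] + (values[hi] - values[lo]) * (i - lo) / (hi - lo)
        elif before:
            filled[i] = values[before[-1]]
        else:
            filled[i] = values[after[0]]
    return filled
```

For example, `fill_missing_years([None, 10.0, None, None, 40.0, None])` fills the two middle gaps with evenly spaced values between 10 and 40. Keeping a parallel list of which years were originally `None` would be one way to support a view of the non-interpolated mosaics.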

"Time Machine": An HTML5 Time-Series Exploration Tool

Once we had produced the final global images, we adapted the Carnegie Mellon CREATE Lab’s open-source “Time Machine” software, which enables authoring, streaming, and exploring very-high-resolution videos. Time Machine videos take advantage of the power of HTML5 and modern web browsers: they are streamed as multiresolution, overlapping video tiles and displayed in a web page by manipulating the HTML5 <video> tag, in much the same way that Google Maps first demonstrated using the HTML <img> tag.
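The general idea behind multiresolution tiles can be sketched as a tile pyramid: each level halves the resolution of the one below it, and the viewer only requests the tiles that intersect the current view. The Python below is a generic sketch, not Time Machine's actual layout. The tile size (`TILE_W`, `TILE_H`) and both function names are hypothetical, and the overlap between neighboring tiles is ignored for simplicity.

```python
import math

# Hypothetical tile dimensions in pixels; Time Machine's real values
# are not given in this post.
TILE_W, TILE_H = 1024, 512


def pyramid_levels(full_w, full_h):
    """Count levels, halving the frame until it fits in a single tile."""
    levels = 1
    while full_w > TILE_W or full_h > TILE_H:
        full_w = math.ceil(full_w / 2)
        full_h = math.ceil(full_h / 2)
        levels += 1
    return levels


def tiles_for_view(x0, y0, x1, y1, scale):
    """List (col, row) tiles covering a viewport at one pyramid level.

    The viewport is given in full-resolution pixel coordinates, with
    x1 and y1 exclusive. `scale` is the downsampling factor of the
    level (1 for full resolution, 2 for half, and so on).
    """
    first_col = (x0 // scale) // TILE_W
    last_col = (math.ceil(x1 / scale) - 1) // TILE_W
    first_row = (y0 // scale) // TILE_H
    last_row = (math.ceil(y1 / scale) - 1) // TILE_H
    return [
        (col, row)
        for row in range(first_row, last_row + 1)
        for col in range(first_col, last_col + 1)
    ]
```

Zoomed far out, a viewer would pick a coarse level so that only a handful of tiles are needed. Zoomed in, it would pick a fine level and still fetch only the few tiles under the viewport.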

Existing zoomable time-lapses with hundreds of millions or billions of pixels per frame document plant growth, bee colony collapse, and very-large-scale simulations of the universe. Time-lapse Earth, however, sets a new record for giant videos: each frame of the video is a global Mercator-projected map with a resolution of 30 meters per pixel at the equator, for a total of 1.78 trillion pixels per frame. That’s about a million times larger than a standard HD video stream. To scale to such large videos, we needed to integrate Time Machine’s data production pipeline into Earth Engine and the rest of Google’s infrastructure. Encoding the final video tiles consumed approximately 1.4 million core-hours of CPU in Google’s data centers over the course of about a day. For CMU's researchers, this would have been impossible without Google's resources.
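The frame size can be checked with a short back-of-the-envelope calculation. The sketch below assumes a square Mercator map whose width spans the equator, an equatorial circumference of roughly 40,075 km, and 1920×1080 as the "standard HD" frame. None of these three assumptions is stated in the post.

```python
# Assumed constants (not given in the post).
EQUATOR_M = 40_075_017       # Earth's equatorial circumference in meters, approx.
RESOLUTION_M = 30            # meters per pixel at the equator
HD_PIXELS = 1920 * 1080      # pixels in one 1080p frame

# A square Mercator map is as tall as it is wide.
side_px = EQUATOR_M / RESOLUTION_M
frame_px = side_px ** 2
ratio_to_hd = frame_px / HD_PIXELS

print(f"map side:     {side_px:,.0f} pixels")
print(f"pixels/frame: {frame_px:.3e}")
print(f"vs. 1080p:    {ratio_to_hd:,.0f}x")
```

Under these assumptions the map is about 1.3 million pixels on a side, which gives roughly 1.78 trillion pixels per frame and a ratio to a 1080p frame of a little under a million.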

Combining all three phases of product generation:

- Total processing time: 3 days

- Total CPU usage: 1.8 million core-hours

- Peak CPU usage: 66,000 simultaneous cores

|

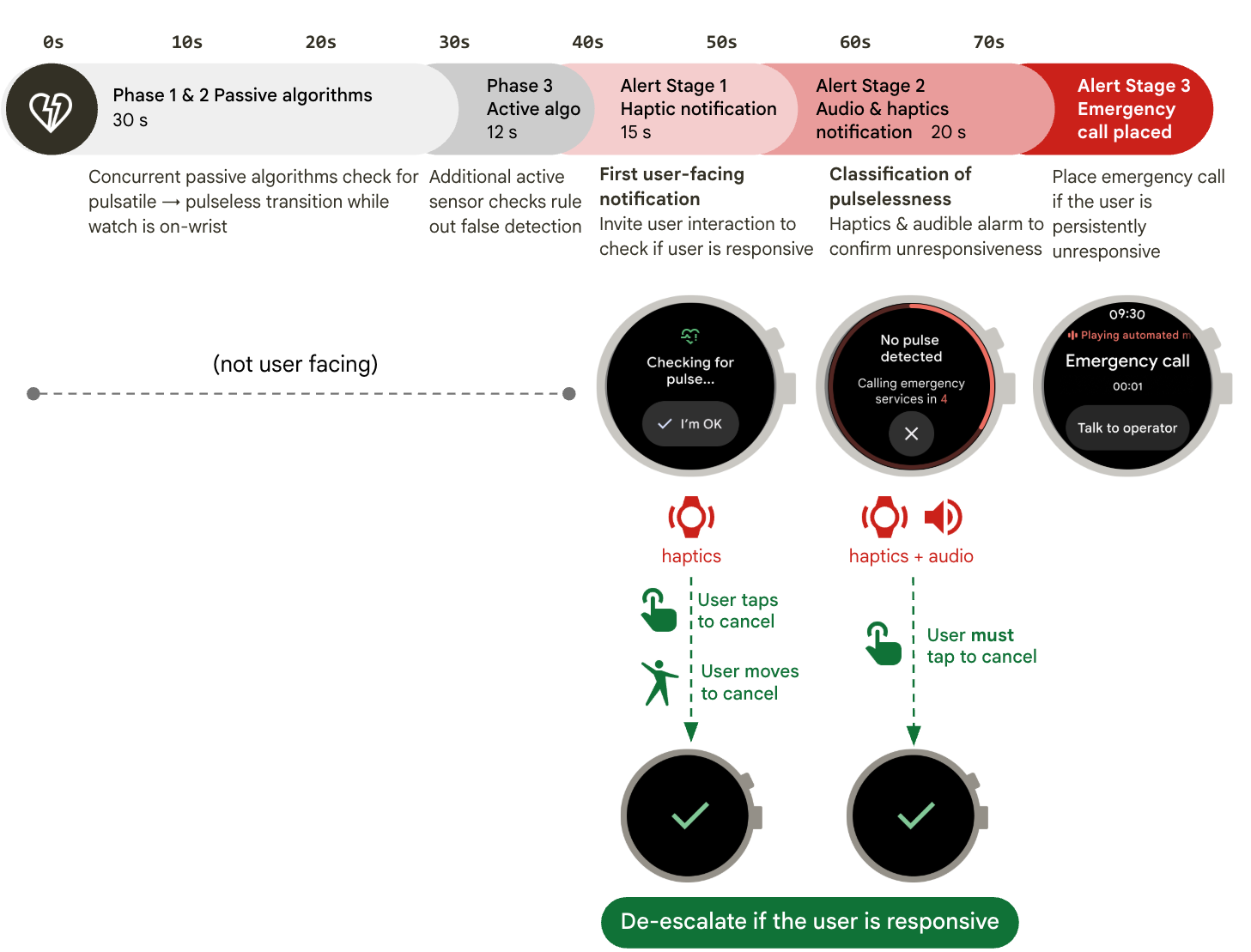

| Destination locations of top 1500 share links, weighted by number of visits. |

Time-lapse Earth is powerful because it helps us to access and construct the story of our planet. That story will become richer with each release, as we continue to improve fidelity and add data. The story-teller is everyone — scientists and citizens alike provide the real value by interacting, exploring, layering their knowledge upon the globe, and sharing their insights so that we can all better understand our world.

We are especially proud of the collaboration that made time-lapse Earth possible, and believe it to be an exemplar of how industry, academia, government, and the press can benefit from working together deeply over a period of years. By drawing on the strengths of each member of the collaborative community, Google strives to integrate the world's technical expertise and knowledge in order to tackle innovative and groundbreaking projects. In doing so, it is our goal to deliver an impactful service, one that can put a focus on the dramatic effect we are having on our planet.
